Enhancing Lead Scoring in Sales with LLMs: A Comprehensive Guide for 2026

Learn how LLMs optimize lead scoring with smarter intent signals, faster qualification, and real results. A practical 2026 guide for sales teams.

Most lead scoring models are running on logic that hasn't been updated since someone configured them in the CRM two or three years ago. They rely on rigid rules, surface-level firmographics, and manual point assignments, even as buyers have completely changed how they research, engage, and signal intent.

Large language models (LLMs) are opening a new chapter for lead scoring optimization. By processing unstructured data that traditional models can't touch — emails, call transcripts, chat messages, social interactions — LLMs identify buying signals that rules-based and even predictive scoring miss entirely. This guide covers what's broken with legacy scoring, how LLMs work in this context, and exactly how to integrate them into your sales workflow.

Why Does Lead Scoring Matter for Sales Teams?

Lead scoring is how sales teams decide who to call first. It's the process of ranking prospects by their likelihood to convert, using a mix of demographic fit, behavioral signals, and engagement data. When scoring works, reps focus on high-intent accounts. When it doesn't, they burn hours chasing leads that were never going to close.

The impact is direct: better scoring means faster pipeline velocity, higher conversion rates, and reps who spend their time selling — not sorting.

How Has Lead Scoring Worked Until Now?

Traditional scoring falls into two camps:

- Rule-based scoring: Marketing or ops manually assigns points. Downloaded a whitepaper? +10. VP title? +15. Gmail address? -5. These rules are static and rarely revisited.

- Predictive scoring: Machine learning models analyze historical conversion data to assign scores automatically. Better than rules, but still limited to structured fields — job title, company size, industry, page visits.
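To make the rule-based approach concrete, here is a minimal Python sketch that reuses the example point values above. The lead fields (`title`, `email`, `downloaded_whitepaper`) and the `rule_based_score` function are hypothetical; real CRMs expose their own schemas and scoring configuration.

```python
from typing import Callable

# One static rule: (description, predicate over a lead record, points).
Rule = tuple[str, Callable[[dict], bool], int]

# Point values mirror the examples above; field names are hypothetical.
RULES: list[Rule] = [
    ("Downloaded a whitepaper", lambda lead: bool(lead.get("downloaded_whitepaper")), 10),
    ("VP title", lambda lead: "vp" in lead.get("title", "").lower().split(), 15),
    ("Gmail address", lambda lead: lead.get("email", "").lower().endswith("@gmail.com"), -5),
]


def rule_based_score(lead: dict) -> int:
    """Add up the points for every static rule the lead matches."""
    return sum(points for _, matches, points in RULES if matches(lead))


lead = {"title": "VP of Sales", "email": "jane@gmail.com", "downloaded_whitepaper": True}
print(rule_based_score(lead))  # 10 + 15 - 5 = 20
```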

Both approaches share the same blind spot: they ignore the richest signals prospects are sending. The language a buyer uses in a reply email, the questions they ask during a discovery call, the way they describe their pain in a form field — none of that enters a traditional scoring model.

That's the gap LLMs close.

What Are LLMs and How Do They Improve Lead Scoring?

A large language model is an AI system trained on massive amounts of text to understand and generate human language. GPT-4, Claude, and similar models are the most recognized examples. Unlike traditional ML models that require structured inputs (numbers, categories, booleans), LLMs process natural language: the messy, nuanced way people actually communicate.

How Are LLMs Relevant to Processing Sales Data?

For sales teams, LLMs unlock an entirely new layer of lead intelligence:

- Email and reply analysis: An LLM reads a prospect's reply and determines whether they're expressing genuine interest, polite deflection, or a specific objection — then adjusts the lead score accordingly.

- Call transcript scoring: After a discovery call, the model assesses buying intent based on language, questions, and objections — not just whether the call happened.

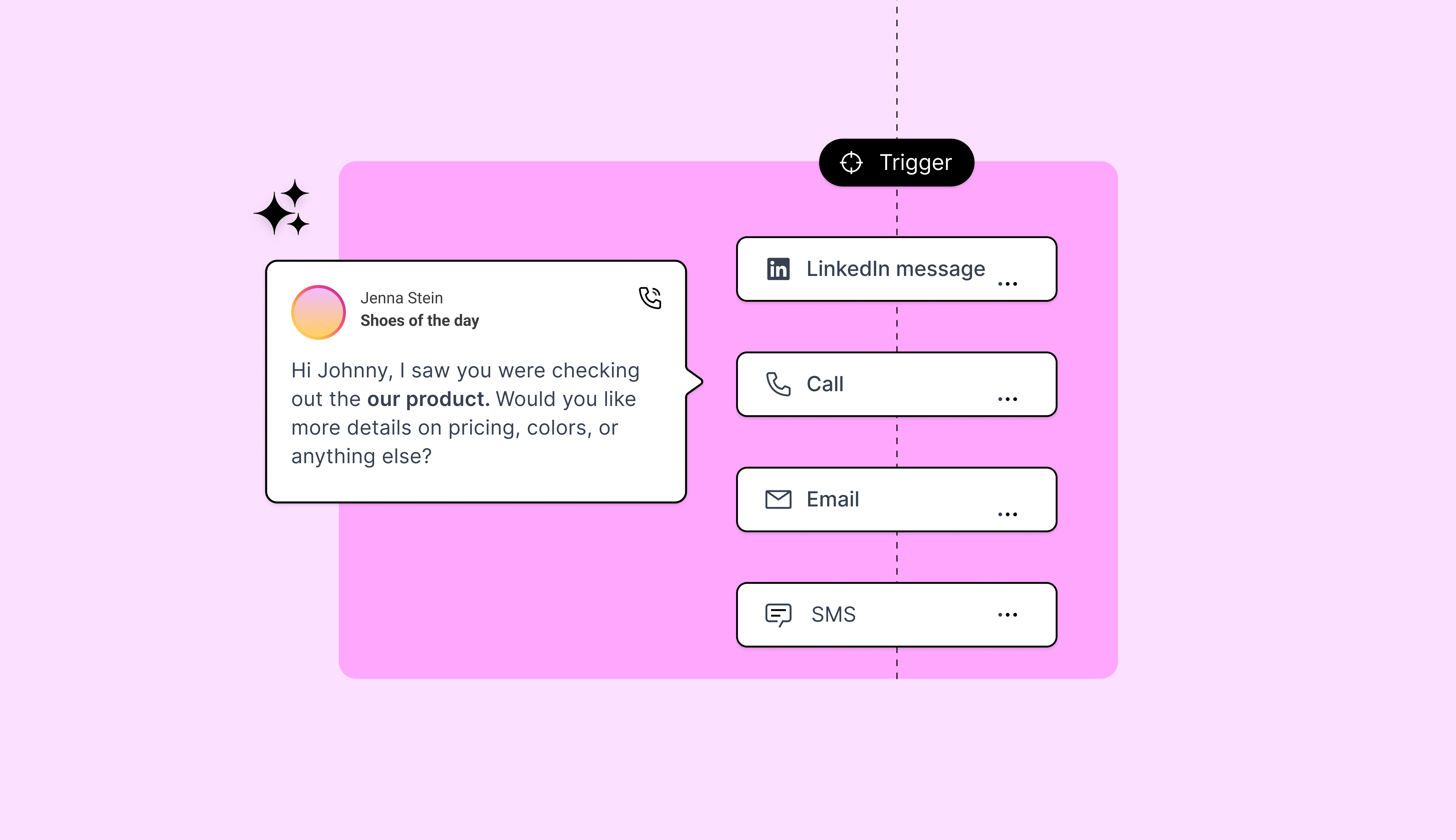

- Multi-channel signal synthesis: LLMs combine inputs from email threads, LinkedIn messages, chatbot conversations, and form submissions into a single contextualized score.

- Intent beyond keywords: Traditional intent tools flag keyword matches. LLMs understand context. There's a meaningful difference between a prospect searching "what is an AI SDR" (early research) and one asking "how to integrate an AI SDR into our CRM" (active evaluation). LLMs catch that distinction.

The result is a score that reflects what a buyer is actually saying and doing — not just which boxes they check.
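A minimal sketch of the email-reply case, assuming you already have some way to call an LLM: `complete` below is a hypothetical stand-in for your provider's client (prompt in, raw text out), and the intent labels and prompt wording are illustrative choices, not a standard. The idea it shows is asking the model for structured JSON and validating it before anything touches a score.

```python
import json
from typing import Callable

# Illustrative label set; adjust to the categories your team actually uses.
INTENT_LABELS = {"high_interest", "objection", "polite_deflection", "neutral"}

PROMPT_TEMPLATE = """Classify the buying intent in the prospect reply below.
Respond with JSON only, in the form {{"intent": "<label>", "reason": "<one sentence>"}},
where <label> is one of: {labels}.

Reply:
{reply}"""


def classify_reply(reply: str, complete: Callable[[str], str]) -> dict:
    """Get a validated intent label for one prospect reply.

    `complete` is a hypothetical stand-in for your LLM client: it takes a
    prompt string and returns the model's raw text output.
    """
    prompt = PROMPT_TEMPLATE.format(labels=", ".join(sorted(INTENT_LABELS)), reply=reply)
    raw = complete(prompt)
    try:
        result = json.loads(raw)
    except json.JSONDecodeError:
        # Model returned something that is not JSON: treat it as no signal.
        return {"intent": "neutral", "reason": "unparseable model output"}
    intent = result.get("intent") if isinstance(result, dict) else None
    if not isinstance(intent, str) or intent not in INTENT_LABELS:
        # Reject labels outside the allowed set rather than guessing.
        return {"intent": "neutral", "reason": "invalid label"}
    return {"intent": intent, "reason": str(result.get("reason", ""))}


# Usage with a fake model, so the example runs without any API key.
def fake_llm(prompt: str) -> str:
    return '{"intent": "objection", "reason": "Already under contract with another vendor."}'


print(classify_reply("Looks useful, but we just signed a two-year deal elsewhere.", fake_llm))
```

Falling back to `neutral` on malformed or unexpected output keeps a bad model response from silently inflating or sinking a lead's score.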

What Does This Look Like in Practice?

monday.com's SDR team was drowning in inbound sign-ups while simultaneously trying to prospect new logos. Hiring more reps would blow up costs. Slowing response times meant lost deals.

They deployed Alta's AI agents across the funnel: the inbound agent called and qualified every sign-up in under 5 minutes — gathering deal context, asking discovery questions, and booking meetings automatically. The AI SDR handled always-on outbound, sending personalized LinkedIn connection requests and follow-ups using each AE's profile. Enterprise-grade prospects were routed live to the owning AE; everyone else could self-serve.

The results:

- Outbound meetings per month: 120 → 180 (50% increase)

- Time saved per Enterprise AE: 14 hours per week

- Speed-to-lead: 17 minutes → under 5 minutes

- SDR headcount added: zero

monday.com's SDR leadership described the agents as an extension of the team — booking meetings faster than a human SDR and giving each AE back half a day every week.

How Can You Implement LLMs in Your Lead Scoring Workflow?

You don't need to rebuild your sales stack. Most teams can layer LLM-based scoring on top of their existing CRM. Here's a step-by-step approach:

Step 1: Audit Your Current Scoring Model

Document what your current model actually scores and what it misses. Many teams discover that the bulk of their criteria, often 80% or more, are firmographic, with minimal behavioral or language-based signals. That gap is your opportunity.
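One way to run this audit, sketched in Python: export your scoring criteria, tag each with a category, and compute the share per category. The criteria list and the three category names below are made-up examples, not a standard taxonomy.

```python
from collections import Counter

# Assumed category names; use whatever taxonomy fits your model.
CATEGORIES = ("firmographic", "behavioral", "language")

# Made-up export of an existing model's criteria: (criterion, category).
criteria = [
    ("Company size 200-1000", "firmographic"),
    ("Industry is SaaS", "firmographic"),
    ("VP or Director title", "firmographic"),
    ("Located in target region", "firmographic"),
    ("Visited pricing page", "behavioral"),
]


def category_shares(criteria: list[tuple[str, str]]) -> dict[str, float]:
    """Return the fraction of scoring criteria that fall into each category."""
    counts = Counter(category for _, category in criteria)
    total = len(criteria) or 1  # avoid dividing by zero on an empty model
    return {category: counts[category] / total for category in CATEGORIES}


print(category_shares(criteria))
# {'firmographic': 0.8, 'behavioral': 0.2, 'language': 0.0}
```

A zero share for `language` is exactly the kind of gap this step is meant to expose.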

Step 2: Identify Your Richest Unstructured Data

Look for text-heavy data your team already generates but doesn't score:

- Email replies and threads

- Call recordings and transcripts

- Chat and chatbot conversations

- Demo request form free-text fields

- LinkedIn message exchanges

These are the inputs LLMs are built for.

Step 3: Choose Your Integration Path

Three common approaches:

- API-based enrichment: Send text data to an LLM via its API, receive a structured intent classification, and write it back to your CRM. Works well if you have some engineering resources.

- Platform-native AI: Some AI sales platforms include LLM-powered scoring as a built-in feature. Alta's agents, for example, process language signals across inbound and outbound automatically and feed them into lead prioritization — no API work required.

- Hybrid: Keep your existing predictive scoring as a baseline and layer LLM-derived signals on top as score modifiers. Low risk, immediate impact.
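The hybrid option can be sketched as a baseline score plus bounded, LLM-derived modifiers. The 0-100 scale and the modifier values below are assumptions (placeholders you would calibrate against real conversion data), and the label names are illustrative.

```python
# Placeholder modifiers per LLM intent label; calibrate these against real outcomes.
INTENT_MODIFIERS = {
    "high_interest": 20,
    "objection": 5,  # engaged, but with a concern to address
    "polite_deflection": -15,
    "neutral": 0,
}


def hybrid_score(baseline: float, intents: list[str]) -> float:
    """Adjust an existing 0-100 predictive score with LLM-derived intent labels."""
    adjustment = sum(INTENT_MODIFIERS.get(label, 0) for label in intents)  # unknown labels count as 0
    return max(0.0, min(100.0, float(baseline + adjustment)))  # clamp to the 0-100 scale


print(hybrid_score(62, ["high_interest", "objection"]))  # 62 + 20 + 5 = 87.0
```

When no LLM signal exists for a lead, the baseline passes through unchanged, so this layer can run next to your current model without replacing it.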

Step 4: Define What the LLM Should Evaluate

Focus LLM analysis on the moments that matter most:

- First reply to outbound outreach

- Demo request or contact form submissions

- Post-demo follow-up emails

- Objection language during calls

- Re-engagement signals from dormant leads
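To keep LLM calls focused on these moments, a simple filter can decide which interactions get analyzed at all. The event-type names and the `Interaction` record are hypothetical; map them to whatever your CRM or engagement tools actually log.

```python
from dataclasses import dataclass

# Hypothetical event types matching the moments listed above.
LLM_TRIGGER_EVENTS = {
    "first_reply_to_outbound",
    "form_submission",
    "post_demo_email",
    "call_transcript",  # where objection language shows up
    "dormant_lead_reengaged",
}


@dataclass
class Interaction:
    lead_id: str
    event_type: str
    text: str


def needs_llm_review(interaction: Interaction) -> bool:
    """Send only high-value moments that actually contain text to the LLM."""
    return interaction.event_type in LLM_TRIGGER_EVENTS and bool(interaction.text.strip())


events = [
    Interaction("L-1", "first_reply_to_outbound", "Can you walk me through pricing?"),
    Interaction("L-2", "page_view", ""),
    Interaction("L-3", "form_submission", "   "),
]
print([e.lead_id for e in events if needs_llm_review(e)])  # ['L-1']
```

Filtering up front also limits the number of LLM calls, and therefore their cost, to the interactions most likely to carry intent.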

Step 5: Test, Calibrate, and Iterate

Run LLM-enhanced scoring alongside your existing model for 30–60 days. Compare which leads each model prioritizes and track which ones actually convert. Adjust weighting based on real outcomes, not assumptions.

monday.com's results didn't come from a single configuration. The system learned which inbound signals predicted real Enterprise deals, which outbound messaging drove replies, and which prospects needed a live hand-off versus self-serve. That feedback loop is what separates LLM-powered scoring from a static model.
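The side-by-side run in Step 5 can be scored with a simple comparison: for each model, take the N leads it ranks highest and check how many actually converted. The `ShadowResult` record and the scores below are toy values for illustration, not results from any real deployment.

```python
from typing import NamedTuple


class ShadowResult(NamedTuple):
    lead_id: str
    legacy_score: float
    llm_score: float
    converted: bool


def top_n_conversion_rate(results: list[ShadowResult], score_field: str, n: int) -> float:
    """Conversion rate among the n leads a given model ranked highest."""
    ranked = sorted(results, key=lambda r: getattr(r, score_field), reverse=True)[:n]
    if not ranked:
        return 0.0
    return sum(r.converted for r in ranked) / len(ranked)


# Toy data: each lead scored by both models during the same period.
results = [
    ShadowResult("A", 80, 55, False),
    ShadowResult("B", 75, 90, True),
    ShadowResult("C", 40, 85, True),
    ShadowResult("D", 70, 30, False),
]
print(top_n_conversion_rate(results, "legacy_score", 2))  # 0.5
print(top_n_conversion_rate(results, "llm_score", 2))  # 1.0
```

Comparing the top of each ranking, rather than average scores, mirrors how reps actually use a score: they work the list from the top down.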

5 Key Indicators to Track When Using LLMs for Lead Scoring

Once LLM-based scoring is running, monitor these five metrics to know whether it's working:

- Intent language accuracy: Are the LLM's intent classifications (high interest, objection, neutral) matching what reps see on actual calls? Spot-check weekly.

- Score-to-meeting conversion rate: Do higher-scored leads convert to meetings at a meaningfully higher rate? If the gap isn't clear, the model needs tuning.

- Rep trust and adoption: Are reps actually using the scores to prioritize? The best model doesn't matter if nobody looks at it.

- False positive rate: How often does a high-scored lead turn out to be unqualified? Reading context can help LLMs reduce false positives, but track this explicitly.

- Time-to-first-touch on high-intent leads: Real-time LLM scoring surfaces hot leads faster than batch processing. Measure whether response time improves; leads contacted within 5 minutes are often cited as 21x more likely to qualify than leads contacted after 30 minutes. monday.com cut its speed-to-lead from 17 minutes to under 5 in the same deployment that raised outbound meetings by 50%.
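Several of these indicators are straightforward to compute from CRM exports. The sketch below assumes hypothetical field names (`score`, `booked_meeting`, `qualified`, `minutes_to_first_touch`) and a score threshold you choose yourself; rep trust and adoption usually need qualitative checks, so they are left out.

```python
from statistics import median


def intent_agreement(llm_labels: list[str], rep_labels: list[str]) -> float:
    """Share of spot-checked leads where the LLM's intent label matches the rep's call."""
    pairs = list(zip(llm_labels, rep_labels))
    if not pairs:
        return 0.0
    return sum(llm == rep for llm, rep in pairs) / len(pairs)


def _rate(flags: list[bool]) -> float:
    """Fraction of True values, or 0.0 for an empty group."""
    return sum(flags) / len(flags) if flags else 0.0


def scoring_health(leads: list[dict], threshold: float) -> dict:
    """Compare high- vs low-scored leads on meetings, false positives, and speed-to-lead.

    Each lead dict is assumed to carry: score, booked_meeting, qualified,
    minutes_to_first_touch (hypothetical field names).
    """
    high = [lead for lead in leads if lead["score"] >= threshold]
    low = [lead for lead in leads if lead["score"] < threshold]
    return {
        "high_score_meeting_rate": _rate([lead["booked_meeting"] for lead in high]),
        "low_score_meeting_rate": _rate([lead["booked_meeting"] for lead in low]),
        # False positive: scored high but turned out to be unqualified.
        "false_positive_rate": _rate([not lead["qualified"] for lead in high]),
        "median_minutes_to_first_touch_high": (
            median(lead["minutes_to_first_touch"] for lead in high) if high else None
        ),
    }


leads = [
    {"score": 85, "booked_meeting": True, "qualified": True, "minutes_to_first_touch": 4},
    {"score": 78, "booked_meeting": False, "qualified": False, "minutes_to_first_touch": 6},
    {"score": 40, "booked_meeting": False, "qualified": True, "minutes_to_first_touch": 30},
]
print(intent_agreement(["high_interest", "objection"], ["high_interest", "neutral"]))  # 0.5
print(scoring_health(leads, threshold=70))
```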

Start Scoring What Actually Matters

Lead scoring has been a known problem with a known set of mediocre solutions for years. LLMs change the equation by making it possible to score what matters most — the language, intent, and context behind every buyer interaction.

The teams that adopt this early won't just score more accurately. They'll respond faster, prioritize better, and close more of the pipeline they already have.

Alta's AI agents process language signals across email, LinkedIn, calls, and chat to prioritize the right leads at the right time. See what that looks like with your data — book a demo.

Frequently Asked Questions

What Is Lead Scoring?

Lead scoring is a methodology for ranking prospects by their likelihood to become customers. Sales and marketing teams assign scores based on demographic fit, behavioral signals, and engagement data to decide where to focus rep time. The goal is prioritization: spending rep hours on the leads most likely to close, rather than treating every inquiry the same way.

How Do LLMs Optimize Lead Scoring?

LLMs optimize lead scoring by analyzing unstructured data that traditional models can't process: emails, transcripts, chat logs, and form responses. Instead of relying on firmographic fields and page views alone, LLMs evaluate the actual language a prospect uses to assess intent, urgency, and fit. This produces scores grounded in real buying behavior, not just checkbox criteria.

How Is AI Used in Sales Beyond Lead Scoring?

AI in sales extends well beyond scoring. AI sales agents automate outbound prospecting, personalize messaging at scale, handle inbound qualification in real time, and coordinate multi-channel outreach, all while feeding data back into scoring models. The compound effect: a sales motion that gets smarter with every interaction. monday.com saw outbound meetings jump from 120 to 180 per month and saved 14 hours per Enterprise AE per week after deploying Alta's AI agents, with no additional headcount.

How Do You Integrate LLMs With Your CRM for Lead Scoring?

The most common path is API-based enrichment: send unstructured text (email replies, call transcripts) to an LLM, receive a structured intent score or classification, and write it back to your CRM as a field. Some platforms handle this natively; Alta's agents, for example, process language signals and sync scoring data to Salesforce and HubSpot automatically. Either way, the CRM stays your system of record, and the LLM adds an intelligence layer on top.

How Should You Score Leads in Long, Multi-Touch Sales Cycles?

In longer, multi-touch sales cycles, scoring should weight engagement depth over single actions. Track how prospects interact across channels over time, pay attention to the language they use around pain points, and prioritize leads showing repeat engagement with high-intent content such as pricing pages, comparison guides, and demo requests. LLMs are especially valuable here because they can synthesize signals across an entire buying journey, which rules-based models miss.
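One way to weight engagement depth over single actions is a time-decayed sum across the whole journey. This is a sketch under stated assumptions: the event weights and the 30-day half-life are placeholders, and exponential decay is simply one reasonable technique, not one the article prescribes. LLM-derived intent labels could be added as extra event types with their own weights.

```python
from datetime import datetime, timedelta

# Placeholder weights: high-intent content counts more than generic touches.
EVENT_WEIGHTS = {
    "demo_request": 8.0,
    "pricing_page": 5.0,
    "comparison_guide": 4.0,
    "blog_visit": 1.0,
}
HALF_LIFE_DAYS = 30.0  # assumption: a touch loses half its weight every 30 days


def engagement_depth(events: list[tuple[str, datetime]], now: datetime) -> float:
    """Sum weighted touches across the whole journey, decaying older ones exponentially."""
    total = 0.0
    for event_type, timestamp in events:
        age_days = (now - timestamp).total_seconds() / 86400
        decay = 0.5 ** (age_days / HALF_LIFE_DAYS)
        total += EVENT_WEIGHTS.get(event_type, 0.0) * decay  # unknown events add nothing
    return total


now = datetime(2026, 3, 1)
journey = [
    ("demo_request", now - timedelta(days=60)),
    ("pricing_page", now - timedelta(days=30)),
    ("pricing_page", now),
]
print(engagement_depth(journey, now))  # 8*0.25 + 5*0.5 + 5*1 = 9.5
```

Repeat visits to the same high-intent page keep adding weight, so a prospect who returns to pricing several times outranks one who visited once, while stale activity fades instead of counting forever.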